You saw the post on Facebook. An industry marketer you’ve followed for a while was announcing their proprietary AI Visibility Engine. Some name that sounds like it belongs in a Pentagon procurement contract. Anthropoid, NeuralStack, something with gravitas. The post included screenshots of their ranking tool, charts shooting up and to the right, testimonials from HVAC companies seeing the kind of lift that looks impossible to argue with. The price wasn’t in the post, but after you slid into their DMs you found out the number landed somewhere between $10k and $25k a month.

Here’s the part nobody mentions in the post. The “proprietary engine” is almost always a whitelabeled version of OTTO, a real AI-powered SEO tool from Search Atlas. That’s worth spelling out, because OTTO uses AI to automate SEO tasks and save your agency time and money. It is not a magic AI optimization tool doing some separate kind of work, apart from SEO fundamentals, to get you ranked in LLMs. Yes, you can now measure AI visibility to some degree alongside the SERP, and OTTO and other tools support that kind of tracking. The underlying question is whether that visibility is earned through a separate scope or specialized tools. You can buy OTTO directly for $99 a month. The Pro plan, which includes whitelabel capabilities so agencies can rebrand it and resell it as their own product, is $399 a month. The arbitrage is legal, the product is functional, and at twenty grand a month against the $99 retail price, the markup is roughly two hundred to one.
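The markup math is easy to check for yourself. Here is a minimal sketch in Python using only the prices quoted above. The $20,000 midpoint is an assumption picked as a representative figure inside the quoted range, and the function name is just for illustration.

```python
# Prices quoted in the article, in USD per month.
OTTO_RETAIL = 99             # buying the tool directly
OTTO_PRO_WHITELABEL = 399    # Pro plan with whitelabel rights
PITCH_LOW, PITCH_HIGH = 10_000, 25_000

# Assumption: a representative price inside the quoted range.
REPRESENTATIVE_PITCH = 20_000


def markup(pitch_price: float, tool_cost: float) -> float:
    """Return how many times the tool's cost the pitch price is."""
    return pitch_price / tool_cost


for label, cost in [("retail", OTTO_RETAIL), ("Pro whitelabel", OTTO_PRO_WHITELABEL)]:
    low = markup(PITCH_LOW, cost)
    mid = markup(REPRESENTATIVE_PITCH, cost)
    high = markup(PITCH_HIGH, cost)
    print(f"{label:>15}: {low:.0f}x to {high:.0f}x (about {mid:.0f}x at $20k)")
```

Which baseline you compare against matters. Measured against the $99 retail plan the multiple is in the hundreds. Measured against the $399 plan the reseller actually needs, it is smaller but still in the tens.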

Maybe you didn’t even reach out to find out pricing. But you thought about it long enough to forward it to whoever does your marketing with a one-line note. Something along the lines of “can we replicate this?” The answer will always be yes. SEO reporting is one of the most manipulable deliverables in marketing. Agency SEO reporting always goes up, or the narrative gets adapted until it does. But that’s a hot take for another day.

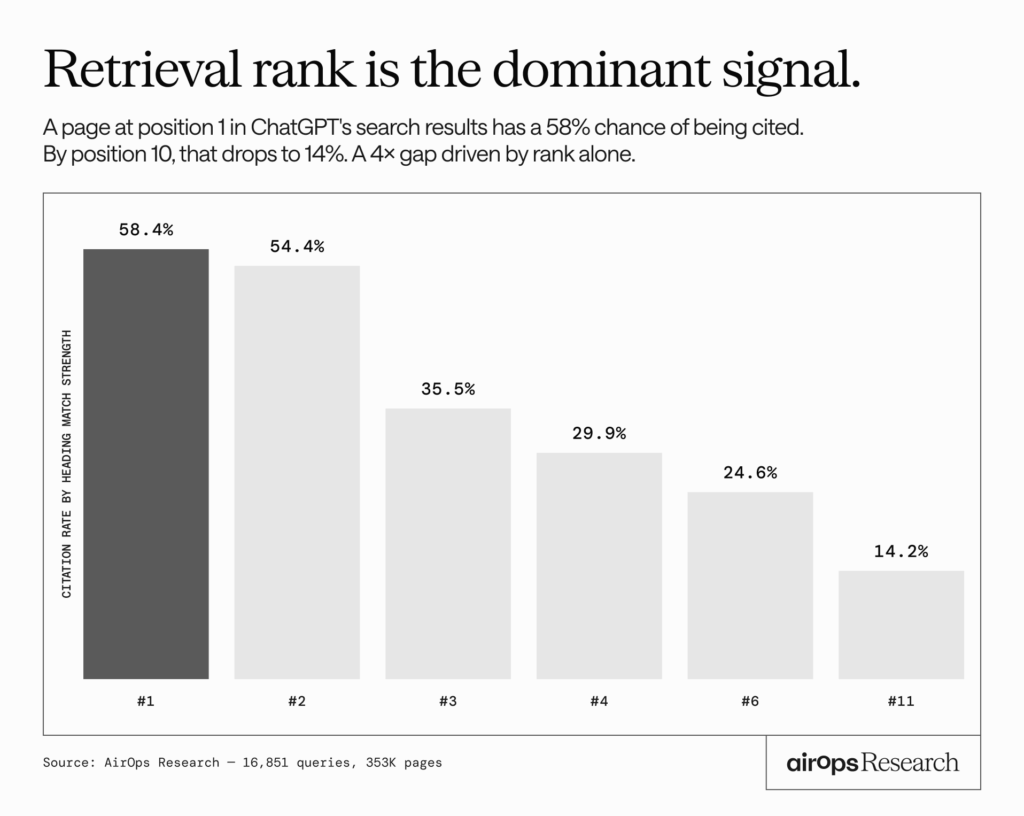

But it’s a fair question. And it’s the question underneath every AEO vs SEO conversation happening in trades marketing right now. The honest answer sits inside an April 2026 analysis by AirOps and Kevin Indig, the biggest public study of AI citation behavior to date. Sixteen thousand unique queries. Three hundred fifty thousand pages. A full trace of what happens between a user’s question and the links ChatGPT cites in its answer. By the end of this piece you’ll know what the study actually shows, what that means for every pitch stacked on top of it, and the five questions worth asking before you wire twenty grand a month for a tool you could buy retail for ninety-nine.

What the acronyms actually mean

Before going further, it helps to name the alphabet.

SEO is search engine optimization. Getting your page to rank in search results. You know this one.

AEO is answer engine optimization. The term originally referred to featured snippets and voice search years before AI, and has since been repurposed to mean getting your content cited in AI-generated answers.

GEO is generative engine optimization. Comes from a November 2023 research paper out of Princeton and Georgia Tech. Focused specifically on how content appears inside generative AI outputs.

AIO is AI optimization. The vaguest of the bunch. Could mean optimizing for AI retrieval, AI training data, AI user experience, or several other things depending on who’s using it. Sometimes the same initials refer to Google’s AI Overviews, which adds another layer of confusion.

LLMO is large language model optimization. Similar territory to GEO, used interchangeably by some practitioners.

There are others. GSO, AISEO, AXO. Google says it’s all just SEO. Bing publishes a sixteen-page AEO guide. Forbes has called AEO “the most dangerous acronym in AI” on the grounds that optimizing for the answer itself, rather than for sources the reader can evaluate, shifts the incentive structure of the open web. The industry hasn’t settled on which term wins, and some of the most thoughtful practitioners in the space have openly written that the acronyms “all mean the same thing.”

That’s the landscape the pitch is arriving into. What matters isn’t the label. What matters is whether the underlying work is genuinely new or whether it’s familiar work dressed in fresh vocabulary. Which is what the data actually helps answer.

What the biggest public study on AI citation actually found

When ChatGPT answers a question, it doesn’t pull the response out of a black box. It runs a series of background web searches, takes the ranked list of pages those searches return, and assembles its answer from what it finds. The AirOps study tracked that entire pipeline across 16,851 queries and 353,799 pages. The findings are consistent, and they point in one direction.

The headline findings

| Signal measured | What the study found |
|---|---|
| Retrieval rank (position in ChatGPT’s underlying search) | Position 1: 58% citation rate. Position 10: 14%. A 4x gap. |
| Heading-to-query match | Strong match: 41% citation rate. Weak match: 29%. |
| JSON-LD schema markup | +6.5 percentage point lift in citation rate |
| Content freshness | Pages 30 to 89 days old outperformed both brand new and aging pages |
| Word count | 500 to 2,000 word pages performed best. 5,000+ word pages underperformed 500-word pages. |
| Focus vs breadth | Pages covering 26 to 50% of subtopics outperformed pages covering 100% |
| Domain authority / backlinks | No positive correlation with citation rate. Slightly negative in some slices. |

The single biggest predictor of getting cited in an AI answer is ranking in the underlying search the AI runs to retrieve its sources. A page at position 1 was cited four times more often than a page at position 10. Once a page is retrieved, the on-page factors that move the needle further are the same factors any competent SEO audit would flag. Headings that match what your customer typed. Schema markup. Focused structure. Recently updated content.
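To see why retrieval rank dominates, it helps to put the reported numbers on the same scale. This is a small sketch using only the figures from the table. The variable names are illustrative, and it compares gaps in percentage points, which is not the same as a relative lift.

```python
# Citation rates as reported in the headline table, in percent.
rates = {
    "position_1": 58.0,
    "position_10": 14.0,
    "heading_strong": 41.0,
    "heading_weak": 29.0,
}
SCHEMA_LIFT_POINTS = 6.5  # reported directly as a percentage-point lift

# Ratio between the top and bottom retrieval positions.
rank_ratio = rates["position_1"] / rates["position_10"]

# Gaps expressed in percentage points, so they can be compared directly.
rank_gap = rates["position_1"] - rates["position_10"]
heading_gap = rates["heading_strong"] - rates["heading_weak"]

print(f"Rank 1 vs rank 10: {rank_ratio:.1f}x, a gap of {rank_gap:.0f} points")
print(f"Strong vs weak heading match: a gap of {heading_gap:.0f} points")
print(f"Schema markup: a lift of {SCHEMA_LIFT_POINTS} points")
```

On these figures the rank gap is several times larger than the heading gap, and the heading gap is roughly twice the schema lift.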

One finding did diverge from classic SEO instinct. Domain authority and backlink count showed no positive correlation with AI citation rate. In some slices, slightly negative. That’s the one genuine shift worth noting. It doesn’t argue for a new discipline. It argues against overweighting backlinks in the SEO you’re already doing.

The retrieval layer is SEO. The heading match is SEO. The schema is SEO. The freshness is SEO. Put the findings side by side with any competent SEO checklist and the overlap is almost total.
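As a rough illustration, the findings translate into a checklist a script could run. This is a sketch under stated assumptions, not the study’s method. The `Page` fields, the example URL, and the idea of treating the reported bands as hard cutoffs are all assumptions made for illustration. The study reports correlations, not thresholds.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Page:
    """Hypothetical record of what you already know about a page."""
    url: str
    word_count: int
    last_updated: date
    has_json_ld: bool


def audit(page: Page, today: date) -> list[str]:
    """Return plain-language flags based on the bands reported in the study."""
    flags = []
    if not 500 <= page.word_count <= 2000:
        flags.append(f"word count {page.word_count} is outside the 500-2,000 band")
    age_days = (today - page.last_updated).days
    if not 30 <= age_days <= 89:
        flags.append(f"last updated {age_days} days ago, outside the 30-89 day band")
    if not page.has_json_ld:
        flags.append("no JSON-LD schema markup")
    return flags


# Hypothetical page: long, stale, and missing schema.
page = Page("https://example.com/ac-repair", 5400, date(2025, 1, 15), False)
for flag in audit(page, today=date(2026, 4, 30)):
    print(f"{page.url}: {flag}")
```

Nothing in that checklist would look out of place in an ordinary SEO audit, which is the point.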

The “new discipline” is mostly old fundamentals in new packaging

Pull up any AEO sales deck and the service list will look something like this. Optimize content for conversational queries. Add FAQ schema. Structure content as atomic answers. Refresh content on a cadence. Ensure fast page load times. Add structured data across service pages.

Every one of those items has been documented SEO guidance for years.

Schema markup is not new. Google has published structured data documentation since Schema.org was founded in 2011. Conversational, question-matched headings are not new either. Featured snippet optimization has taught that approach since Google launched featured snippets in January 2014. Content freshness as a ranking factor predates almost everyone in the industry currently selling AEO services.

Page speed, which shows up in almost every AEO pitch deck as a “new” requirement for the AI era, became an explicit Google ranking signal in April 2010. Core Web Vitals were officially weighted as a ranking signal in 2021. Five years before anyone started pitching AEO.

None of this means the work is unimportant. Clean schema, fast pages, focused content, intent-matched headings. This is the actual work of being findable on the internet. The question being raised here is narrower. It’s whether work that has been documented SEO practice for a decade suddenly becomes a separate service line because somebody attached a new acronym to it.
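For a sense of how modest the schema item on that list is, here is a minimal sketch that builds a JSON-LD block for a local HVAC business. The business details are hypothetical, and `to_script_tag` is a helper named here for illustration. `HVACBusiness` is a standard Schema.org type, but which properties your pages need is something to confirm against Google’s structured data documentation.

```python
import json

# Hypothetical business details, for illustration only.
local_business = {
    "@context": "https://schema.org",
    "@type": "HVACBusiness",
    "name": "Example Heating and Air",
    "url": "https://example.com",
    "telephone": "+1-555-555-0100",
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
    },
    "areaServed": "Springfield",
}


def to_script_tag(data: dict) -> str:
    """Wrap a schema dictionary in the script tag that goes in the page's HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(to_script_tag(local_business))
```

The output is a few lines of markup pasted into the page template once. It is worth doing and worth getting right, and it is not a new category of work.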

What’s actually different, and what’s actually being sold

Two things about AI retrieval have shifted SEO strategy. They are worth naming honestly before the rest gets dismantled.

The first is that focused content pulls ahead of exhaustive content for AI citations. The ten-thousand-word “ultimate guide” playbook still ranks on Google and still benefits you indirectly because Google ranking drives AI retrieval. But narrower, directly query-matched pages get cited more often once the retrieval happens. That’s a refinement to how you weight depth versus breadth in your SEO planning. It isn’t a new discipline.

The second is that backlinks matter less for AI citation than they do for Google rank. A high-domain-authority site still wins on Google, and winning on Google still wins in AI answers, so the investment still pays. But the citation math doesn’t reward link profiles directly the way it rewards query-matched content. Again, a calibration to existing SEO strategy. Not a new service line.

Neither of these is AEO-only. Both are things a decent SEO vendor should be factoring in anyway. The bar for “genuinely new discipline deserving its own invoice” is higher than “something to keep in mind.”

Now here’s what’s being sold on top of those two refinements.

The “AI-readiness” platform migration

The pitch goes like this. Your current website platform wasn’t built for the AI era. Migrate to Webflow, or Framer, or some other platform marketed as future-proof, and you’ll be set up to win in AI search.

Both Webflow and WordPress can ship fast, clean sites. Modern lightweight WordPress themes like GeneratePress and Kadence are engineered for Core Web Vitals compliance and routinely pass with room to spare when paired with decent hosting. Webflow generates clean semantic HTML by default. Neither platform has a native advantage when it comes to getting cited in AI answers.

The AirOps study measured dozens of variables. The underlying CMS was not one of them, because the retrieval system doesn’t care. It cares about whether the page is fast, clean, retrievable, and well-structured. Every modern CMS can meet that bar.

What a platform migration actually delivers, at its best, is a technical SEO cleanup. Which may be worth doing on its own merits if your current site has five years of plugin debt and load times over three seconds. That’s a real project with a real payoff. That payoff has been on the table since Core Web Vitals became a ranking factor in 2021. Calling it AEO-readiness today is selling the same site speed work that was SEO five years ago, wearing a fresh acronym.

The AI-content tooling markup

OTTO and tools like it show up in more than one pitch. The first version, the one with the gravitas branding, is the whitelabel-and-resell pitch from the intro. The other version is inside your agency’s content pipeline, where AI drafting is reducing the labor cost of producing pages. The data is genuinely neutral on authorship. The retrieval system does not care whether a human or a model wrote the page. It cares what the page says, how it’s structured, and how relevant it is to the query.

The question worth asking isn’t whether AI-assisted content can work. It’s where the time savings are going.

AI tools cut the labor cost of a lot of work a marketing agency does. Drafting, yes, but also technical audits, schema implementation, competitor analysis, reporting, recommendation generation. Done right, those savings get reinvested in the client’s SEO program. More hours on the work that moves their numbers, not fewer hours billed.

Done poorly, the savings get pocketed as margin. The retainer stays the same. The deliverable thins out. And in the worst version, the tools get pointed at output volume instead of output quality, which is exactly the pattern Google just punished.

Google’s March 2026 core update took a clear position on the worst version. Search Engine Land reported that the update targeted scaled content abuse as a primary enforcement priority, with industry analysis documenting traffic drops in the range of 60 to 80 percent for sites publishing AI-generated content at scale without editorial oversight. Seventy-one percent of affiliate sites were negatively affected. HubSpot, one of the most sophisticated content marketing operations in B2B software, had already seen its blog traffic drop roughly 70 percent across the 2024 to 2025 update cycle after years of publishing broad top-of-funnel content outside its core expertise.

Google named the target explicitly. Scaled content abuse. The policy has existed for a while. The March update turned it into enforcement.

That was six weeks ago. It isn’t a prediction about where Google is heading. It’s a report on what Google just did.

If your agency is using AI tools to reinvest the time savings in your SEO program, that’s probably good news for you. If they’re using those tools to pocket the margin or ship more pages faster, that tooling just got more expensive.

The separate AEO retainer

The third pitch is the simplest. A second monthly invoice, on top of your existing SEO retainer, covering what looks suspiciously like the same work described with different vocabulary.

If the scope reads like rebranded SEO hygiene (schema implementation, content freshness, heading optimization, page speed improvements), it is rebranded SEO hygiene. The question isn’t whether that work matters. It does. The question is whether you’re buying net-new work or paying twice for work that should have been included the first time.

Five questions that separate a real pitch from repackaged work

Before you sign a new invoice for AEO services, or before you accept that your current SEO vendor is already covering it, ask these five questions. The answers will tell you whether you’re buying new work, getting shortchanged on old work, or both.

1. Are my page headings built to match the questions my customers actually ask?

Heading-to-query match is the single biggest content signal in the data. If your service pages read like internal taxonomy rather than customer questions, there’s a real gap, and it should be getting closed as part of any SEO scope.

2. Is my structured data complete, current, and correct across service pages?

Schema adds a measurable lift to citation rate. It’s also SEO table stakes. A vendor charging you extra to add FAQPage, LocalBusiness, or Service schema is charging for table stakes.

3. Are my service pages focused on one job each, or bloated with everything?

This is the one area where AI retrieval genuinely changes SEO strategy. Focused pages outperform comprehensive ones. If your service pages try to cover repair, maintenance, installation, and financing in a single wall of copy, they’re working against you now in a way they weren’t five years ago.

4. What’s our editorial cadence for refreshing content, and what review sits between AI drafting tools and publication?

This question cuts two ways. If the answer on cadence is vague, content freshness isn’t being managed. If the answer on editorial review is vague, the AI-tooling problem Google punished in March is sitting in your content pipeline.

5. What net-new work is included in this AEO scope that isn’t already covered under my current SEO scope?

The one that matters most. Any honest vendor should be able to answer this specifically. If the answer is generic, or if the items named are things your current scope already covers, you have your answer about whether the new invoice is earning its keep.
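Question 1 lends itself to a quick spot check. The sketch below scores how many of a customer query’s meaningful words appear in a page heading. This is an assumption-heavy stand-in: the study’s own match metric isn’t described here, and the stopword list, function names, and example headings are all made up for illustration.

```python
import re

# Small illustrative stopword list; a real audit would use a fuller one.
STOPWORDS = {"a", "an", "the", "is", "my", "to", "do", "i",
             "how", "what", "why", "of", "for", "in"}


def tokens(text: str) -> set[str]:
    """Lowercase the text and keep its meaningful words."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {w for w in words if w not in STOPWORDS}


def overlap(heading: str, query: str) -> float:
    """Share of the query's meaningful words that also appear in the heading."""
    query_words = tokens(query)
    if not query_words:
        return 0.0
    return len(query_words & tokens(heading)) / len(query_words)


query = "why is my furnace blowing cold air"
headings = ["Why Is My Furnace Blowing Cold Air?", "Residential Heating Solutions"]
for heading in headings:
    print(f"{overlap(heading, query):.2f}  {heading}")
```

A heading written in the customer’s words scores high. A heading written in internal taxonomy scores near zero. Word overlap is crude and misses synonyms, so treat low scores as a prompt to read the page, not a verdict.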

If you want a broader framework for vetting a marketing agency beyond just AEO, we’ve put together a full interview checklist for evaluating a home services marketing agency elsewhere. The five questions above are a narrow AEO filter. The checklist is the wider lens.

AEO is SEO

Every few years the marketing industry invents a new acronym, wraps it around work most people are already doing, and charges a premium for the rebrand. Social media optimization. Mobile SEO. Voice search optimization. Now AEO, GEO, AIO, LLMO and whichever ones haven’t been launched yet.

The tactics shift, the acronyms cycle, the invoices climb. Underneath it, the work that actually earns results looks roughly the same as it did ten years ago, with some legitimate refinements layered on top, which should be happening anyway.

Marketing is a dynamic field. The strategies and tactics should be too. New things worth paying for come along, and when they do, the right move is to pay for them. AI retrieval is real. Citation behavior is measurable now in ways it wasn’t a year ago. The industry is moving and your marketing should move with it.

The only bar is the one any smart operator already applies to every other vendor conversation. If you’re buying something new, the deliverables should actually be new. If you’re buying something incremental, the scope should be incremental. And if the pitch is priced at thirty times the retail cost of the underlying tool, ask questions. There may be real improved services baked in around it. But it’s a reason to ask specifically what that service is and whether the markup is buying you anything the tool or core service alone wouldn’t.

Ask the questions. Read the studies. Check the retail price of the tool under the rebrand. Buy the things that move your business. Skip the things that move someone else’s.